AI-powered tools introduce a different kind of design challenge because they don’t just help people complete tasks; they also shape decisions and influence access to services. They bring new risks as well, like automation bias or misplaced trust.

As a result, traditional user research methods often fall short. One-time usability tests can tell you whether an interface works, but not whether an AI-powered system is appropriate, trustworthy, or aligned with real-world decision-making.

At Nava Labs, we used participant advisory councils (PACs), ongoing partnerships with the same group of participants, to design and evaluate AI-powered tools in real-world contexts. Here are five reasons this approach is especially valuable for AI user research.

1. AI-powered systems require detailed evaluation, not just usability testing

Most software is evaluated based on whether someone can complete a task. AI-powered systems differ in that they generate suggestions, rankings, or summaries that influence how people think and make decisions.

This raises new questions:

Does the AI-powered tool support or override professional judgment?

When does a recommendation feel helpful versus intrusive?

How does it affect someone’s confidence in their own expertise?

These are not questions that can be answered in a single research session. By working with the same advisory members over time, we observed how participants’ trust in and reliance on the system evolved. We could see when they started to depend on it, when they pushed back, and how they integrated it into their decision-making. This enabled us to evaluate not only whether the system worked but also how it shaped human judgment in practice.

2. Trust in AI is built over time, not in a single session

Trust is central to any AI-powered system, but it doesn’t form instantly. In one-time testing, participants often approach AI-powered outputs with either skepticism or over-trust, neither of which reflects long-term use. PACs allowed us to study trust as it developed over time.

Participants returned session after session, seeing how the system changed and whether their feedback was incorporated. Over time, their responses became more grounded:

They questioned outputs more thoughtfully

They identified edge cases and limitations

They articulated what made the system feel reliable or not

Trust became something we could observe and design for, rather than assume.

3. AI-powered tools must fit real workflows, or they create new friction

AI-powered tools are often positioned as efficiency gains. But if they don’t align with how people actually work, they can introduce new challenges rather than reduce them. This is especially true for decision support systems, where timing, context, and sequence matter.

Through PAC sessions, we were able to see:

When in a workflow an AI-powered tool is actually useful

Where additional steps slow people down

How we need to structure information to support real tasks

These insights helped us design tools that fit into existing workflows rather than forcing users to adapt to the system. This alignment is critical for AI-powered tools. A well-designed model can still fail if it shows up at the wrong moment or in the wrong format. Additionally, the PAC model is useful here because it’s difficult to fully capture all the details of a person’s workflow in a single research session.

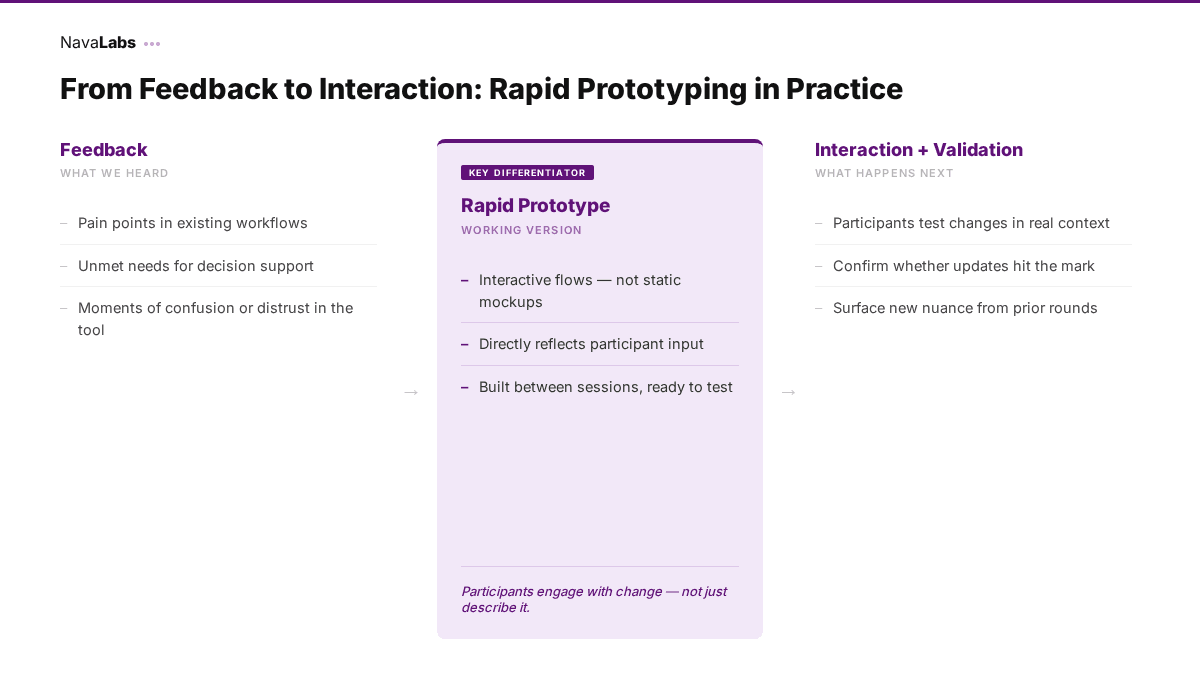

4. Rapid prototyping makes AI-powered behavior tangible and testable

One of the challenges of researching AI-powered solutions is that ideas can remain abstract. It’s difficult for participants to react to descriptions like “the system will recommend resources based on user input” without seeing how that actually plays out.

At key points in our projects, we used rapid prototyping to translate feedback into interactive prototypes for PAC participants. Instead of discussing hypothetical features, we brought back working versions of flows, recommendations, or outputs that met participants’ needs.

A presentation showing how real stakeholder feedback was turned into testable prototypes.

Giving participants prototypes to work with changed the quality of their feedback:

Participants could confirm if the AI-powered outputs matched their expectations

They could identify where the system misinterpreted intent

They could react to real interactions, not imagined ones

This process also reinforced trust. Participants could see how their input shaped the product and test those changes directly. This kind of tangible interaction is essential for AI-powered systems, where behavior can be unpredictable or opaque.

5. PACs surface risks that traditional testing misses

AI-powered systems introduce risks that are easy to overlook in short-term research:

Over-reliance on recommendations

Misinterpretation of outputs

Gaps in data coverage or local relevance

Conflicts between system logic and lived expertise

These issues often don’t appear immediately; they emerge through repeated use and deeper familiarity with the system. Because PAC members engaged with the product over time, they were able to identify these risks more clearly. They pointed out where the system felt overly confident, lacked transparency, or didn’t reflect real-world complexity. This enabled us to address potential issues earlier, before they became embedded in the product experience.

Conclusion

During a visit to Goodwill Central Texas in Austin, Nava Labs worked alongside staff to test ideas in real time, using shared screens and conversation to refine tools based on their daily workflows.

AI-powered systems are often evaluated based on technical performance: accuracy, speed, or scale. But in practice, their success depends on whether people trust them, understand them, and can use them effectively in real-world contexts.

PACs helped us design for that reality by enabling us to build an ongoing dialogue with the people who would actually use the system. This continuity made it possible to design AI-powered tools that support people’s decision-making, rather than disrupting it. For teams building AI-powered products, this approach offers a way to ground innovation in real experience from the start.
