Summary

Project overview

Nava Labs developed and evaluated an artificial intelligence (AI)-powered chatbot designed to help caseworkers more effectively assist clients in finding and enrolling in public benefit programs. This first-of-its-kind study aims to help narrow the gap of over $227 billion in annual unclaimed benefits by improving caseworker capabilities with generative AI (GenAI) technology.

Our solution

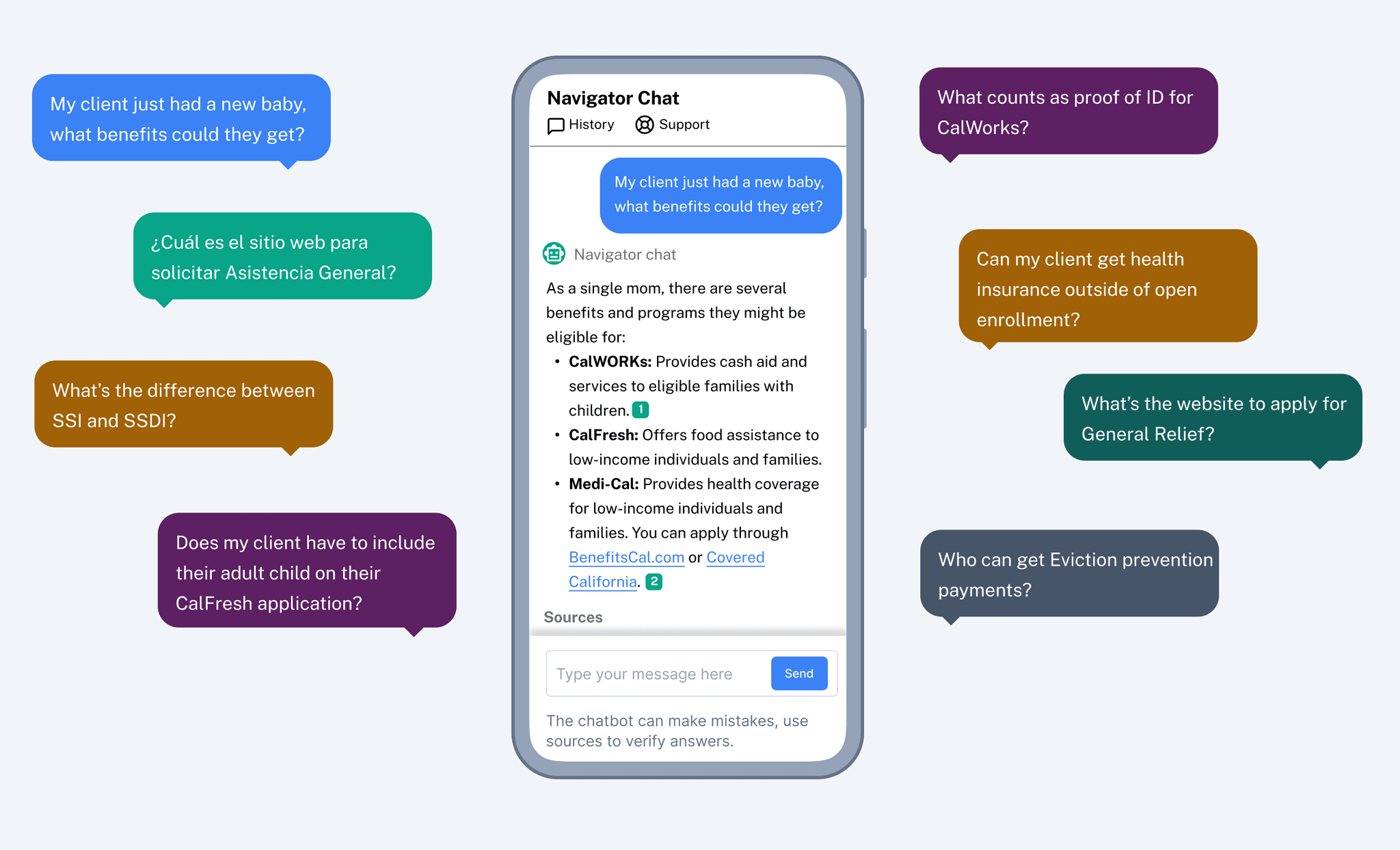

The assistive chatbot explains program rules in plain language, uses retrieval-augmented generation to pull information from pre-vetted sources, provides direct citations, supports multilingual translation, and prevents hallucinations through careful guardrails. We built the technology to integrate with existing workflows via an application programming interface (API) and tested the chatbot in real scenarios with nonprofits and government agencies.

Evaluation methodology

Researchers conducted a mixed-methods evaluation guided by an implementation science framework, which sought to identify factors that affected uptake of the assistive chatbot in addition to impact outcomes. The evaluation included:

A randomized controlled trial with 125 caseworkers examining accuracy effects from being shown AI-generated responses to hypothetical client questions developed from real experiences.

A 14-week, real-world pilot with Benefit Navigator, a web-based tool by Amplifi that helps caseworkers navigate benefit and tax credit programs on behalf of benefit-seeking clients. The pilot included 61 caseworkers across six organizations in Los Angeles County and used a quasi-experimental design comparing intervention and comparison groups.

A mixed-methods analysis aggregating qualitative and quantitative findings across multiple data sources, including product logs, surveys, and in-depth interviews.

Key findings

Accuracy

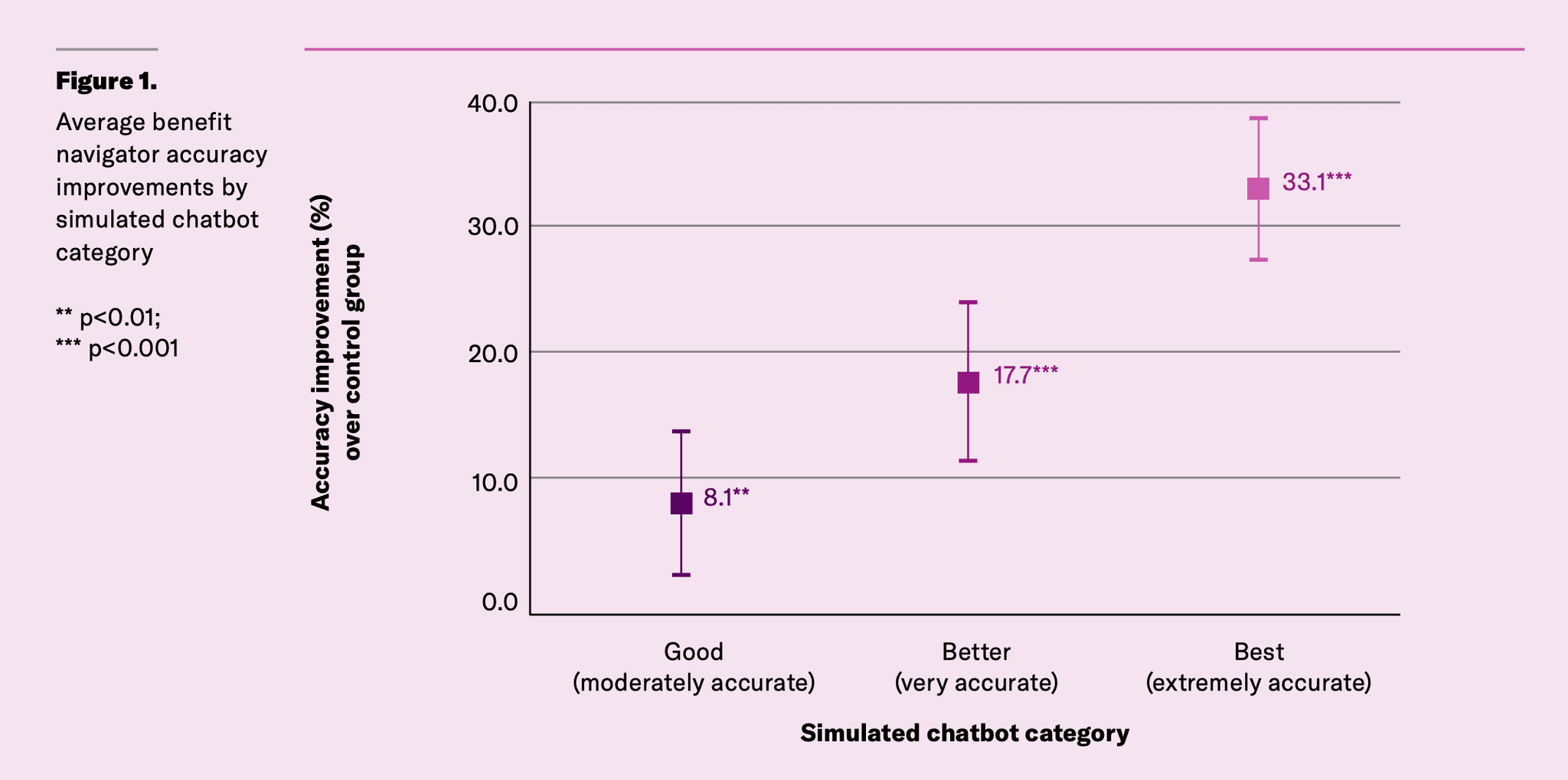

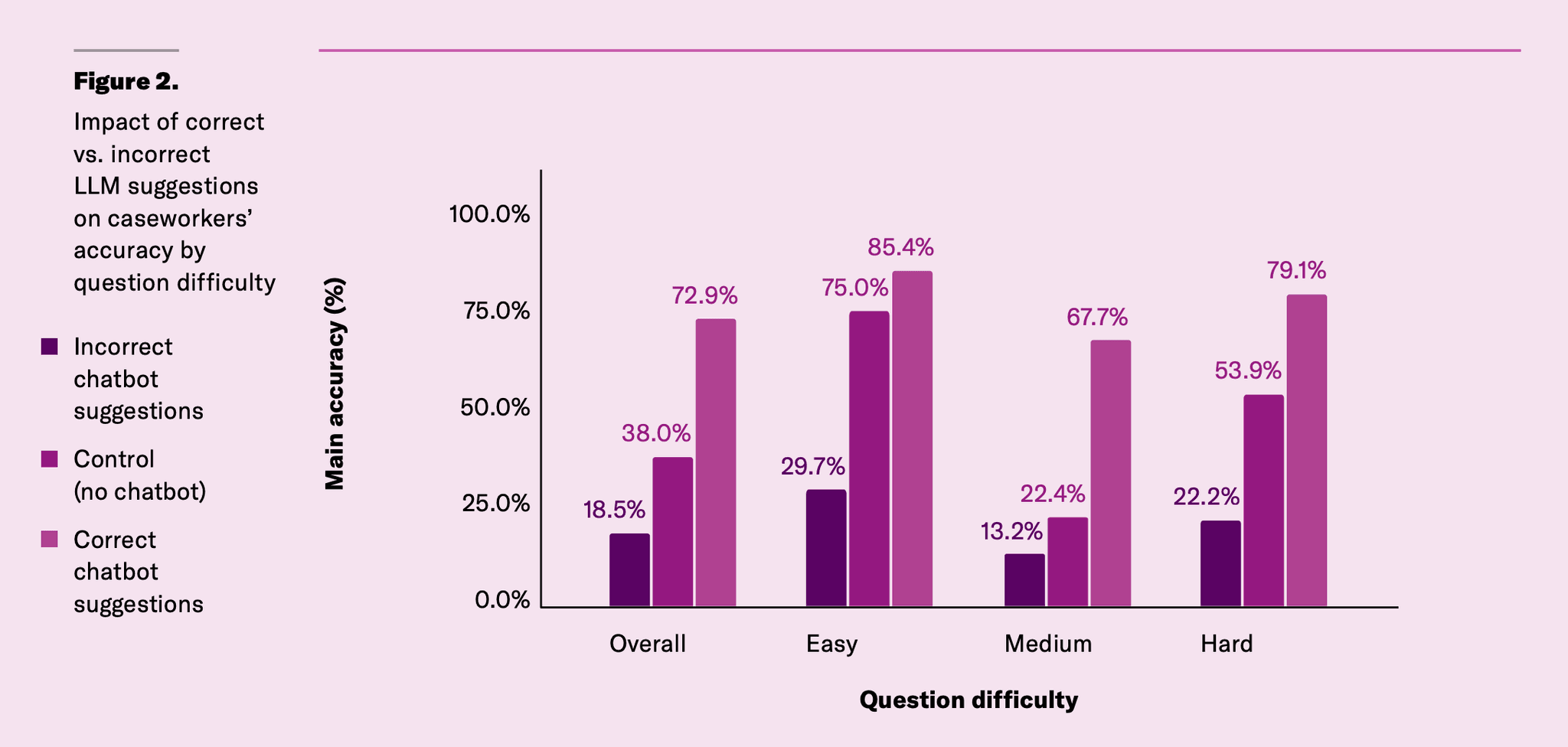

The chatbot is estimated to improve caseworker accuracy by an average of 40% with stronger improvements for more difficult client questions.

Acceptability

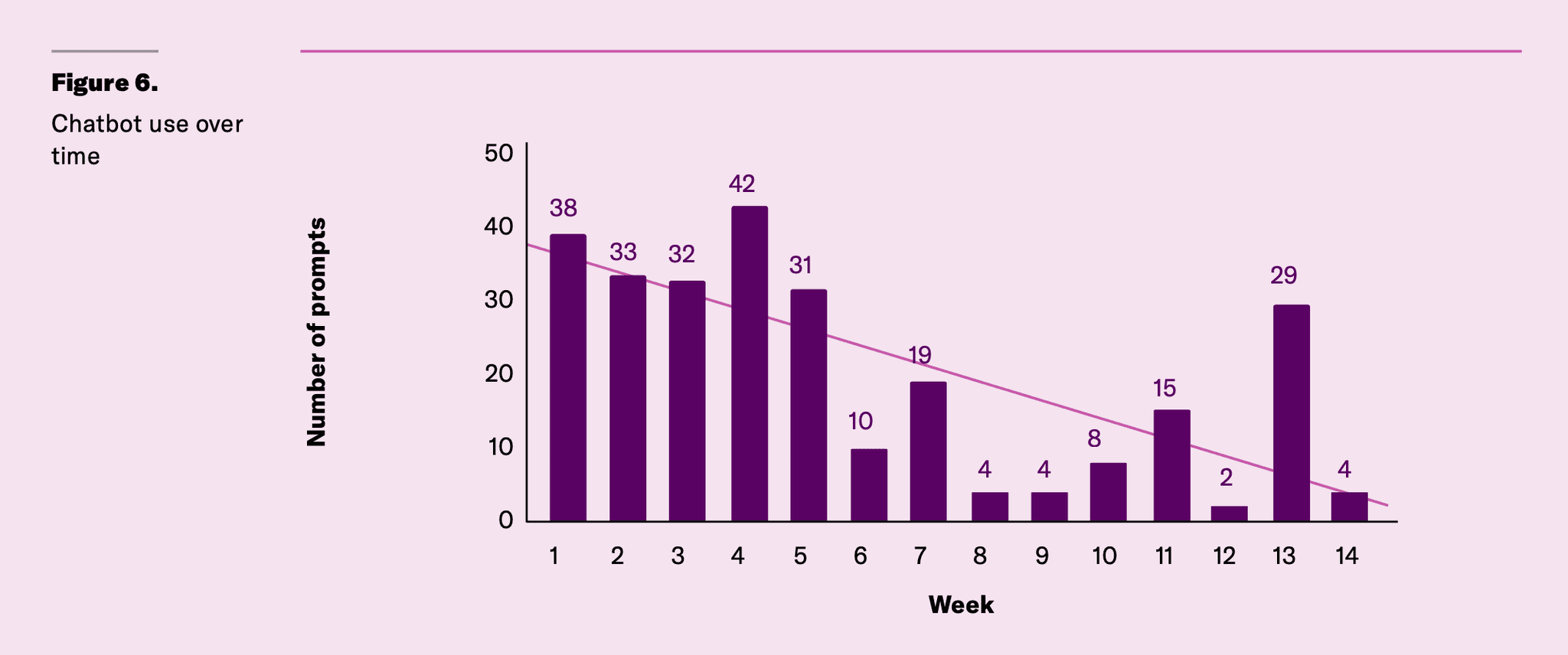

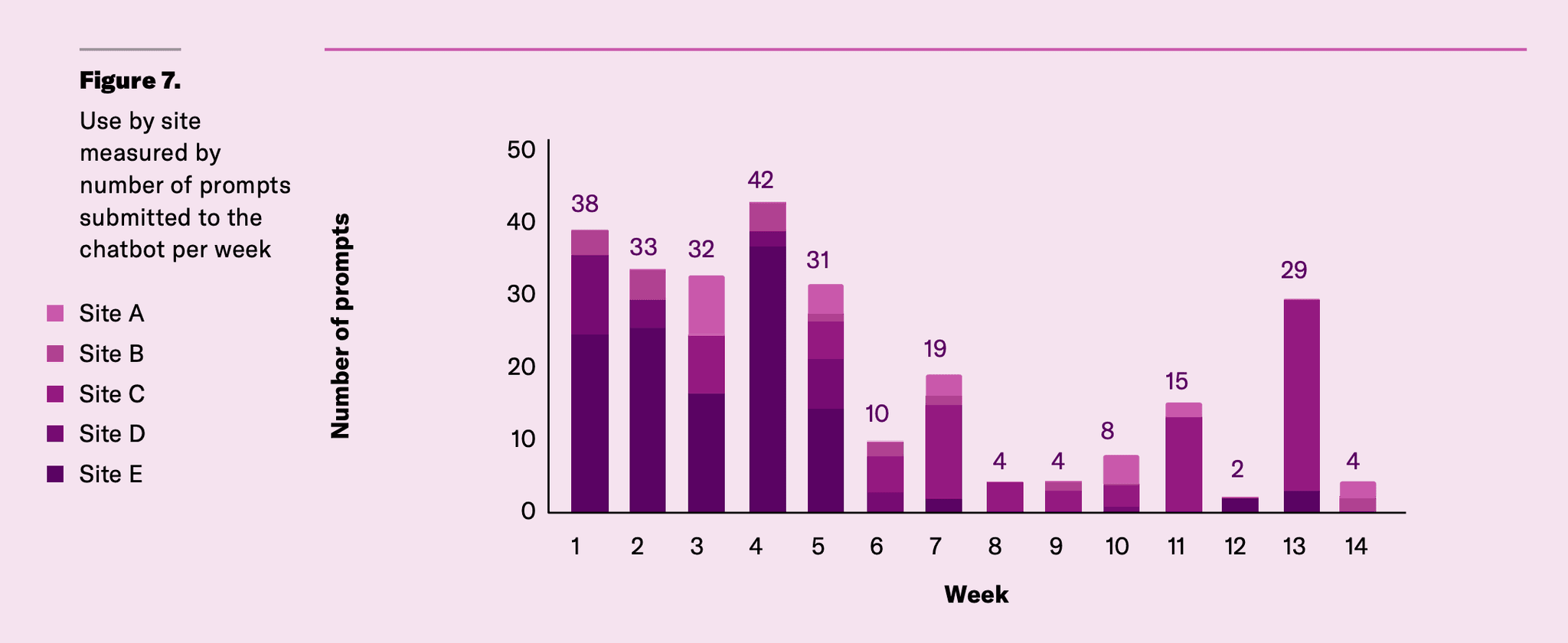

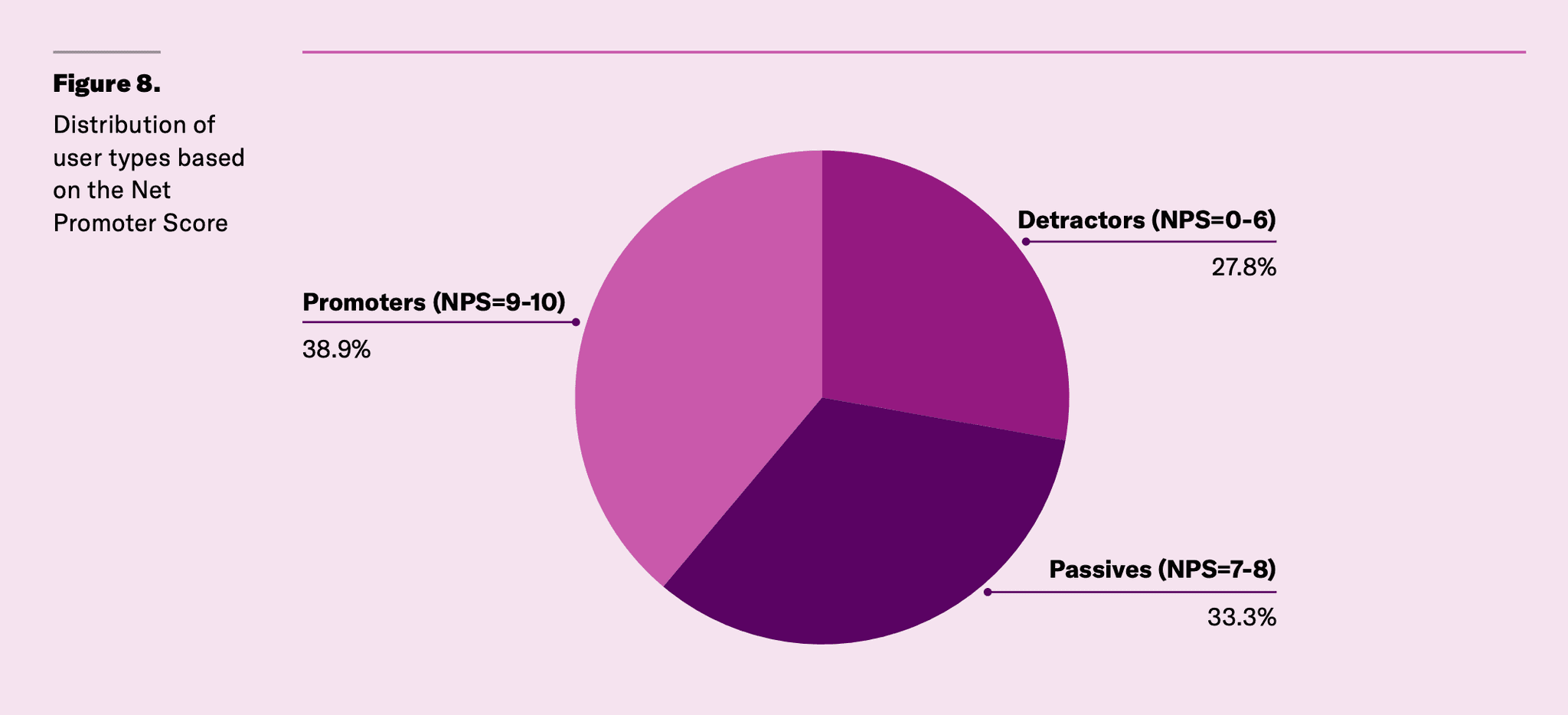

Throughout the pilot, about 65% of caseworkers with access to the chatbot used it, submitting an average of 14 prompts each. However, usage declined over time and varied by site, highlighting the value of sustained engagement strategies. The Net Promoter Score (NPS), a metric used to assess user experience, was 11, indicating moderate average satisfaction. While 40% of respondents were “promoters,” meaning they would recommend the chatbot to their colleagues, others expressed less enthusiasm, highlighting mixed adoption and opportunities for improvement.
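For readers unfamiliar with the metric, here is a minimal sketch of how an NPS is conventionally calculated: the percentage of promoters (ratings of 9 or 10 on a 0 to 10 scale) minus the percentage of detractors (ratings of 0 to 6). The function name and the sample ratings are hypothetical and are not pilot data. We assume the standard NPS definition applies here.

```python
def net_promoter_score(ratings):
    """Return the NPS for a list of 0-10 'would you recommend' ratings.

    Assumes the conventional cutoffs: 9-10 promoter, 7-8 passive, 0-6 detractor.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Passives count toward the total but not toward either group.
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical ratings (not pilot data): 4 promoters, 3 passives, 3 detractors.
example_ratings = [10, 9, 9, 10, 8, 7, 8, 3, 5, 6]
print(net_promoter_score(example_ratings))  # 10.0
```

Under that definition, a score of 11 with 40% promoters would imply that roughly 29% of respondents were detractors, with the remainder passive.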

Administrative burden

Results from the pilot showed promising but inconclusive evidence of reduced learning and psychological costs for caseworkers. These findings lack statistical significance because of sample size limitations and a lower response rate on the endpoint survey.

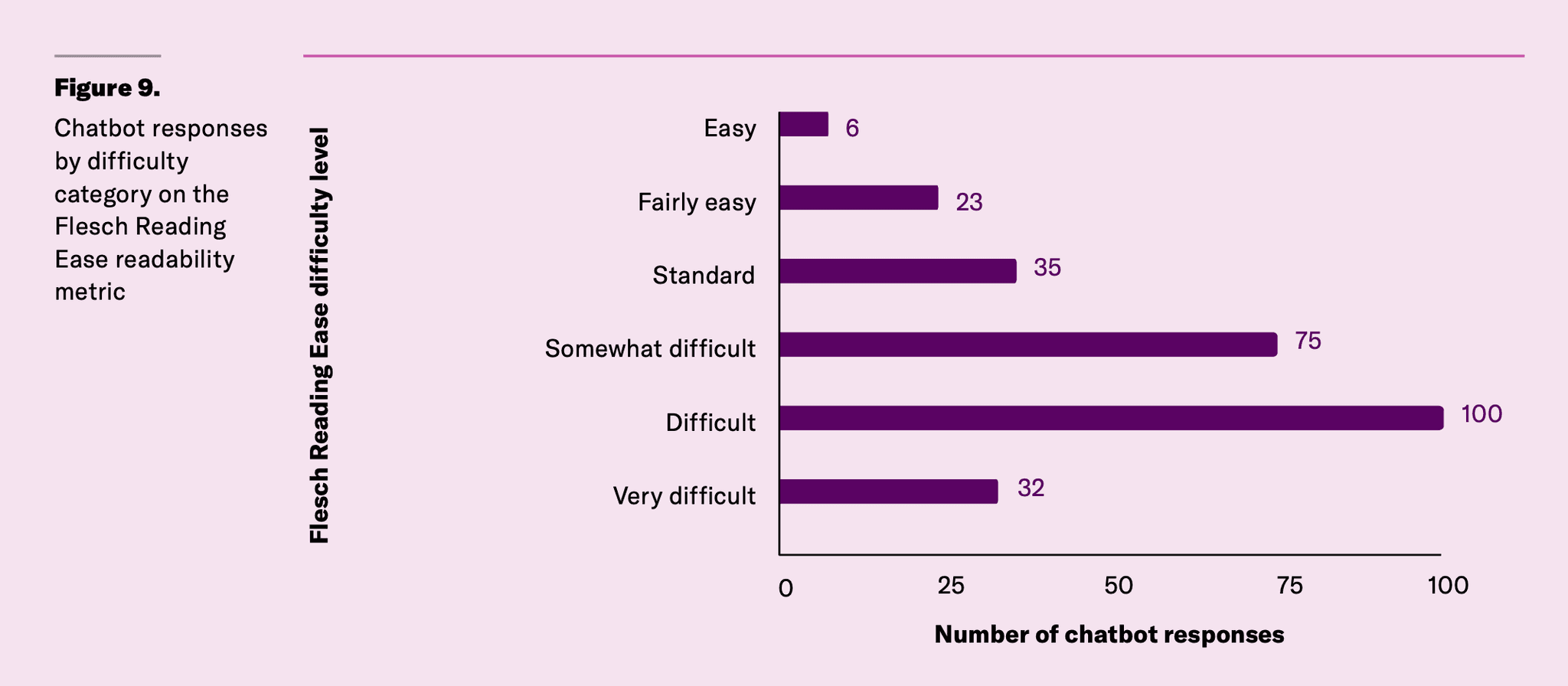

Accessibility

Chatbot responses averaged a 10th to 12th grade reading level. That is higher than the recommended 8th grade standard but significantly more accessible than source policy manuals, which require college-level reading ability.
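This summary does not say which readability formula produced the grade levels. As an illustration only, the sketch below uses the Flesch-Kincaid grade level, one widely used formula, with a rough vowel-group syllable counter. The function names and sample sentences are hypothetical, and a dedicated readability library would estimate syllables more accurately.

```python
import re


def count_syllables(word):
    """Rough syllable estimate: count runs of consecutive vowels (including y)."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text):
    """Approximate U.S. grade level using the Flesch-Kincaid formula."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * len(words) / len(sentences)
            + 11.8 * syllables / len(words)
            - 15.59)


# Hypothetical sample texts, not drawn from the chatbot or any policy manual.
plain = "You can get help with food. Ask your caseworker how to apply."
dense = ("Eligibility determination necessitates verification of household "
         "composition and documentation of countable income.")

# Shorter sentences and shorter words yield a lower estimated grade level.
print(flesch_kincaid_grade(plain) < flesch_kincaid_grade(dense))  # True
```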

Implementation insights

We identified “super users” with high engagement and chatbot usage at sites with active participation in training, consistent organizational reinforcement, and peer support. Service delivery models significantly influenced use patterns, with rapid-intake sites showing higher activity than long-term case management programs.

Implications

This evaluation demonstrates that rigorously tested AI-powered tools can meaningfully improve benefit navigation accuracy, particularly for newer staff. However, successful implementation requires intentional support strategies, ongoing engagement, and attention to accessibility. The findings provide a roadmap for scaling AI-powered tools in public benefit contexts while highlighting critical areas for continued refinement.

Read the full report

Check out the full report to learn more about the Nava Labs AI research program, assistive chatbot, chatbot evaluation design, and outcomes.

About the assistive chatbot

Chatbot functionality

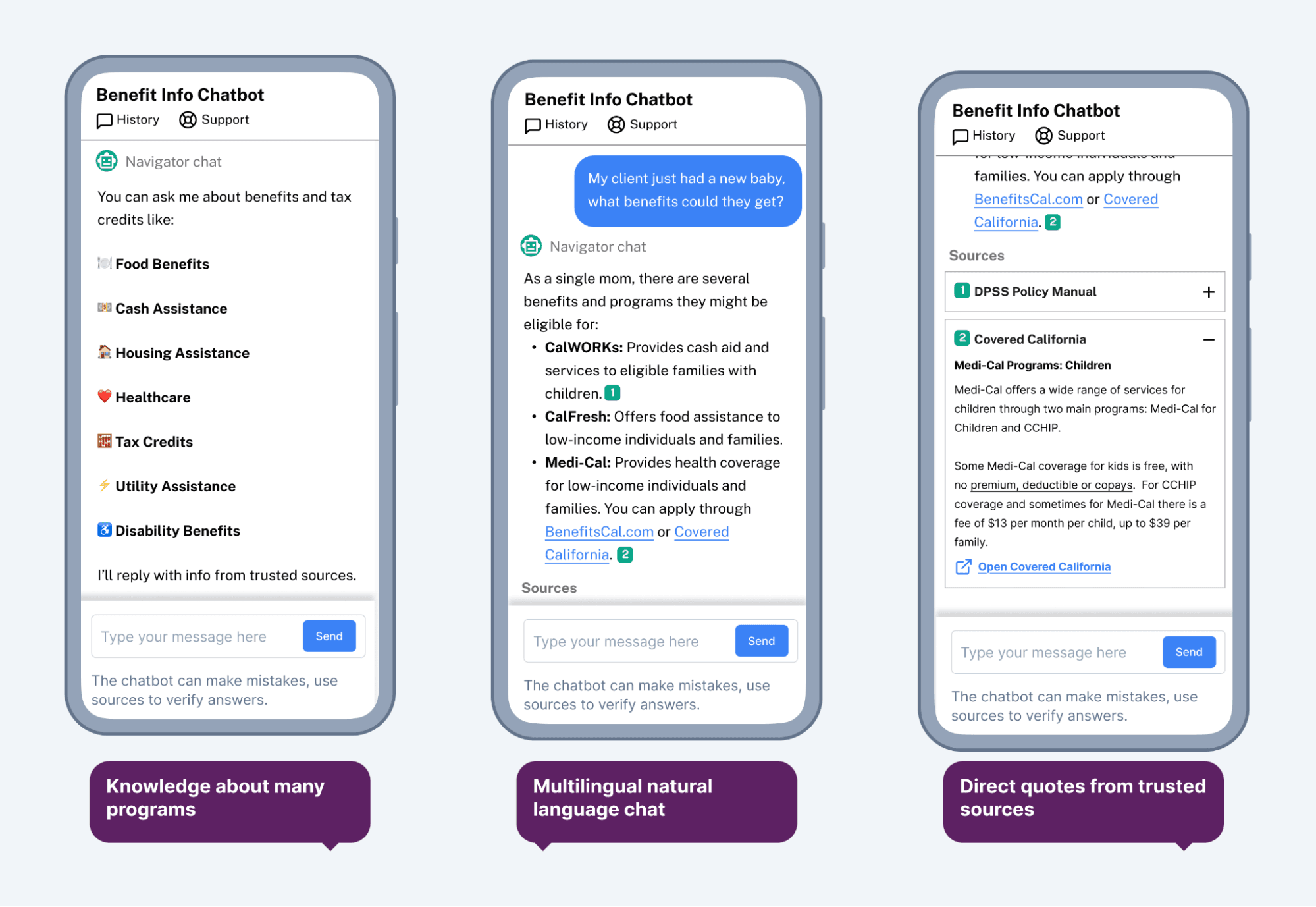

The chatbot aims to make it easier for caseworkers to find credible answers to questions about health and human services program eligibility and enrollment to discuss with their clients in real time. Nava Labs sought to reduce cognitive load for caseworkers, speed up responses, and build their confidence.

The chatbot solution:

Leverages a foundational Large Language Model (LLM).

Provides plain-language descriptions about program rules.

Uses retrieval-augmented generation (RAG) to pull information from pre-vetted sources only.

Provides direct source citations from the pre-vetted sources with links to the original source if further exploration is needed.

Provides multilingual translation support.

Prevents hallucinations with clear guardrails around the chatbot’s scope of knowledge; if a question is out of scope, the chatbot responds “I don’t know the answer” or provides a link to the topic rather than making up a response.
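The retrieval and guardrail behavior listed above can be illustrated with a toy sketch. This is not the Nava Labs implementation: it replaces the LLM and any real retrieval method with simple keyword overlap, and every name, passage, URL, and threshold below is a hypothetical placeholder. It shows only the general pattern of answering from vetted sources with a citation and falling back when a question is out of scope.

```python
import re

# Hypothetical pre-vetted passages; titles, URLs, and text are placeholders.
VETTED_SOURCES = [
    {"title": "Example food assistance guide",
     "url": "https://example.org/food",
     "text": "Households may qualify for food assistance based on income and household size."},
    {"title": "Example tax credit guide",
     "url": "https://example.org/tax-credit",
     "text": "Workers with low to moderate income may claim a tax credit when filing a return."},
]

FALLBACK = "I don't know the answer."
STOPWORDS = {"a", "an", "the", "for", "to", "of", "and", "or", "with", "on",
             "in", "may", "who", "what", "how", "do", "i", "is", "can", "when"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword tokens."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def retrieve(question, sources, min_overlap=2):
    """Return the best-matching vetted source, or None if nothing is close enough."""
    question_tokens = tokenize(question)
    best, best_score = None, 0
    for source in sources:
        score = len(question_tokens & tokenize(source["text"]))
        if score > best_score:
            best, best_score = source, score
    return best if best_score >= min_overlap else None


def answer(question, sources=VETTED_SOURCES):
    """Answer only from vetted sources; otherwise return the fallback response."""
    source = retrieve(question, sources)
    if source is None:
        return {"answer": FALLBACK, "citation": None}
    # A real system would pass the passage to an LLM to draft a plain-language
    # reply; this sketch simply returns the passage with its citation.
    return {"answer": source["text"], "citation": source["url"]}


print(answer("Who may qualify for food assistance?")["citation"])
print(answer("How do I renew a passport?")["answer"])
```

The first call matches the food assistance passage and returns its citation. The second has no overlap with any vetted passage, so the sketch returns the fallback instead of an invented answer.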

Preparing the chatbot for real-world implementation

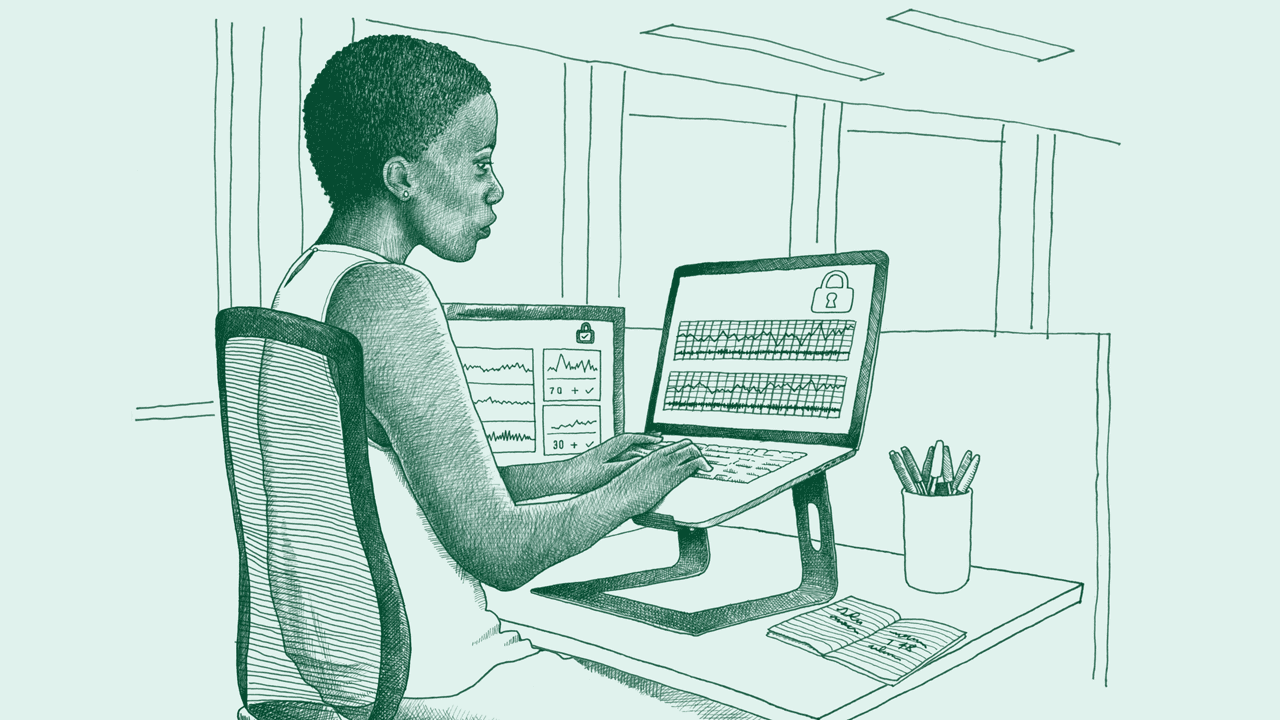

We intentionally built the chatbot technology to adapt to different settings and integrate with a range of different workflows through an application programming interface (API).

The team completed several rounds of prototyping and testing to prepare the assistive chatbot for the pilot with nonprofits and government agencies. Rigorous testing ensured the assistive chatbot was ready for use in real-world settings and able to collect data on usage and impact. The team iterated on the solution until technical evaluations signaled high chatbot response accuracy and user testing showed promise for addressing caseworker needs. The team also confirmed that all security, privacy, and infrastructure requirements were met. We then readied the assistive chatbot for integration into the workflows of our pilot partner Amplifi, which integrated the chatbot with its Benefit Navigator tool and ensured a seamless user interface across the tools.

Chatbot evaluation design

Read the full report to learn more about the evaluation questions and methods.

Outcomes

1. Measurement and instrument development

Outcome 1.1: We effectively adopted validated measures and instrumentation to study the effects of AI-powered tools in a public benefits context.

2. Accuracy

Outcome 2.1: Use of the assistive chatbot is estimated to improve caseworkers’ accuracy by an average of 40%.

Outcome 2.2: The chatbot had the largest impact on accuracy for more difficult client questions.

3. Appropriateness

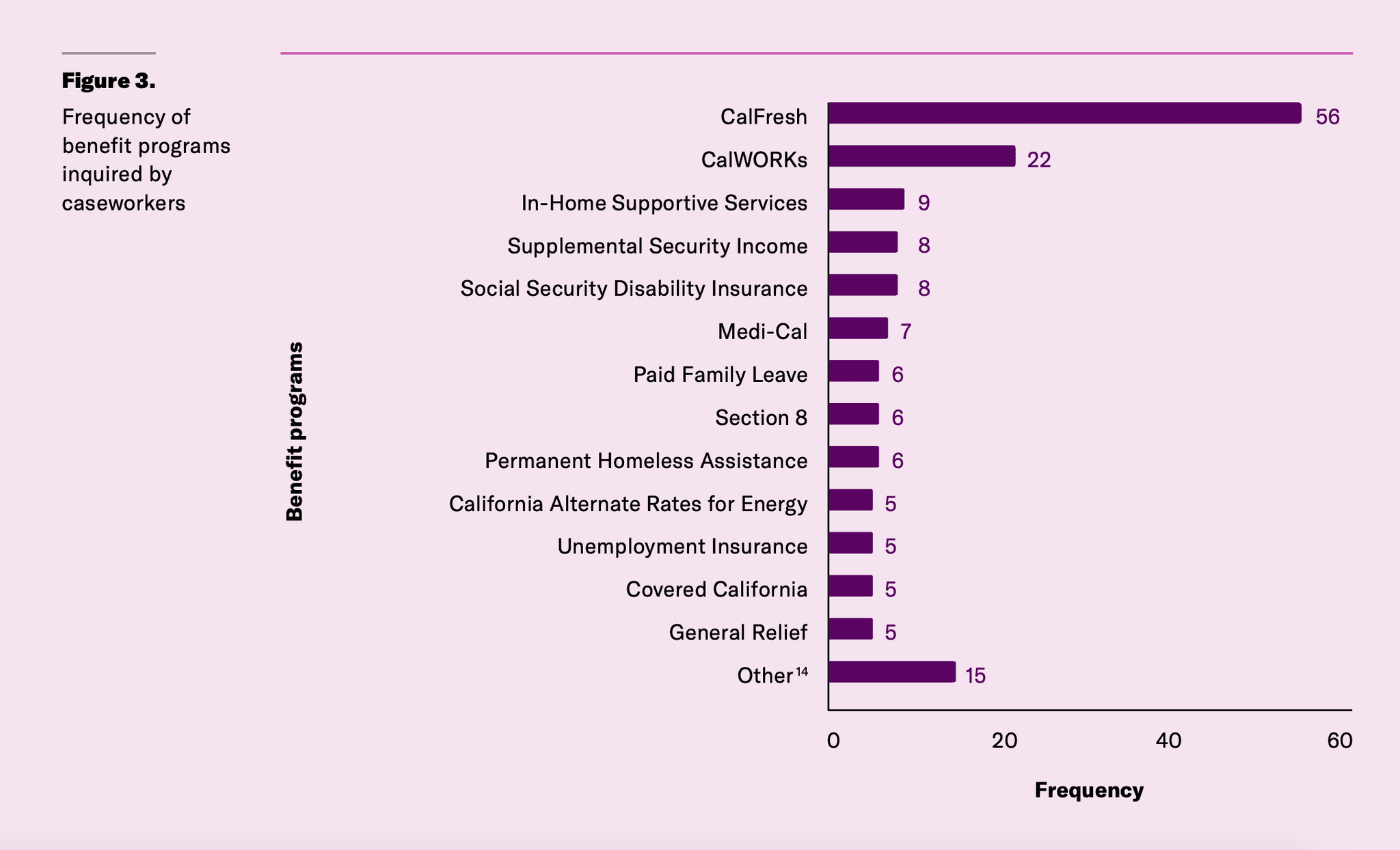

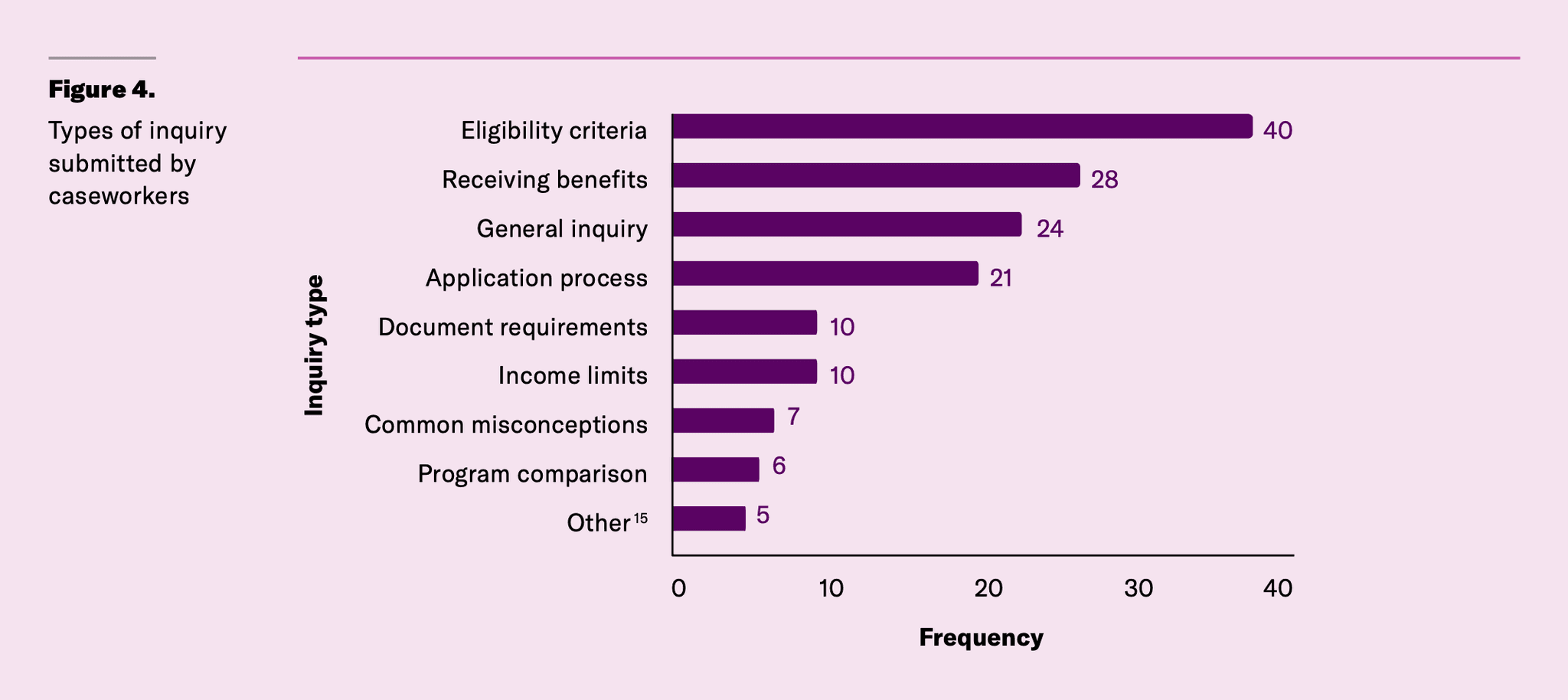

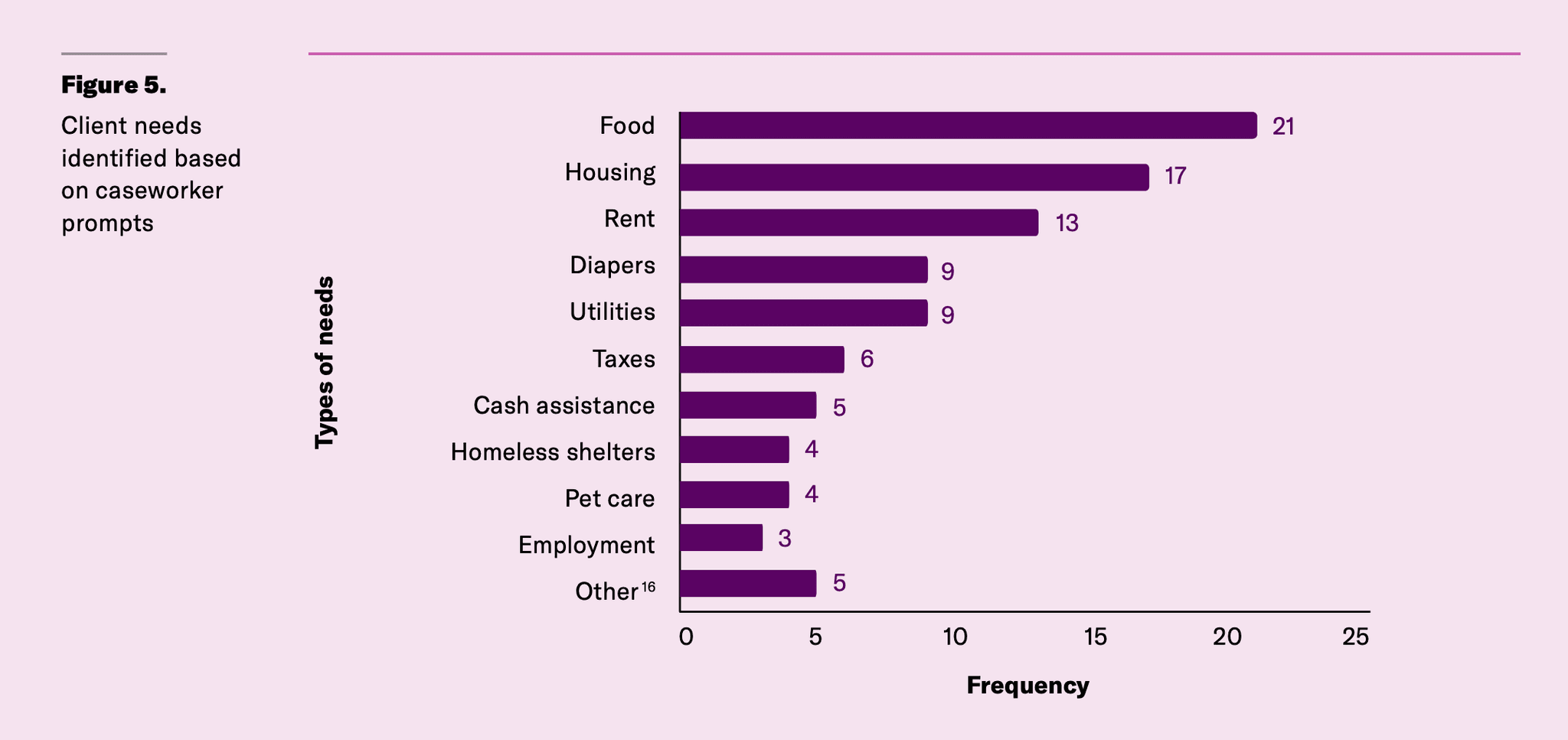

Outcome 3.1: The assistive chatbot offered caseworkers reliable and timely information about a wide range of public benefit programs.

4. Acceptability

Outcome 4.1: 65% of caseworkers with chatbot access reported real-world use.

Outcome 4.2: Several “super users” of the assistive chatbot emerged during the pilot.

Outcome 4.3: The Net Promoter Score suggests a fairly successful pilot with opportunities for improvement.

5. Administrative burden

Outcome 5.1: There is promising evidence that the assistive chatbot may reduce learning and psychological costs, but results were inconclusive due to small sample size and wide variation in experiences.

6. Accessibility

Outcome 6.1: Chatbot responses averaged a 10th to 12th grade reading level.

Outcome 6.2: Chatbot responses are more accessible than source policy manual text.

Outcome 6.3: Multilingual capabilities are enhanced by caseworkers’ language and cultural translation.

Read the full report for details on the outcomes.

Acknowledgements

The report authors would like to acknowledge members of the Nava Labs team: Alicia Benish, Charlie Cheng, Diana Griffin, Foad Green, Genevieve Gaudet, Kanaiza Imbuye, Kasmin Scott, Kevin Boyer, Ryan Hansz, and Yoom Lam for contributing to the development and implementation of the pilot and evaluation plan; Bob Wilkinson, Chloe Hilles, Greg Jordan-Detamore, and Noam Leead for design and communications support; members of the Amplifi team: Jill Bauman, Brit Gilmore, Karen Van Kirk, and Ryan Fendy, for partnering on the real-world pilot implementation and recruiting caseworkers for the advisory council and pilot participation; and the 12 members of the caseworker advisory council and the over 60 caseworkers who participated in the pilot from various human services agencies and nonprofit organizations in Los Angeles and who provided invaluable insights about the experience using the chatbot.

Written by

Partnerships and Evaluation Lead

Director of Partnerships and Advocacy

Associate Research Professor, Georgetown University

Postdoctoral Fellow, Georgetown University

Information Science PhD Student, Cornell University

Assistant Professor of Information Science, Cornell University